![Acer Predator Triton 500 PT515-51-704N [15.6", Intel Core i7-9750H 2.6 GHz, 16 GB RAM, 1 TB SSD, NVIDIA GeForce RTX 2080 Max-Q 8 GB, Win 10 Home], black, buy used | Acer at low prices on buyZOXS.de](https://www.zoxs.de/data/pic/10888451_org.jpg)

Acer Predator Triton 500 PT515-51-704N [15.6", Intel Core i7-9750H 2.6 GHz, 16 GB RAM, 1 TB SSD, NVIDIA GeForce RTX 2080 Max-Q 8 GB, Win 10 Home], black, buy used | Acer at low prices on buyZOXS.de

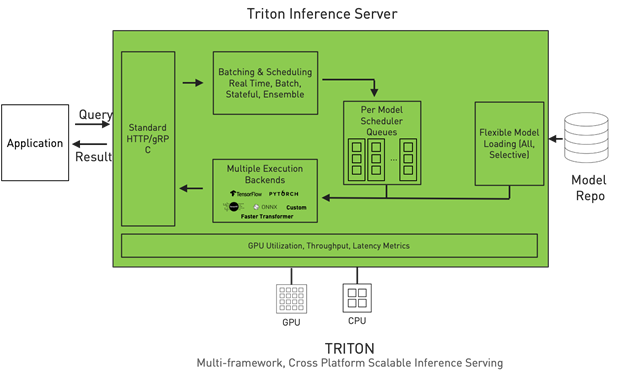

GTC 2020: Deep into Triton Inference Server: BERT Practical Deployment on NVIDIA GPU | NVIDIA Developer

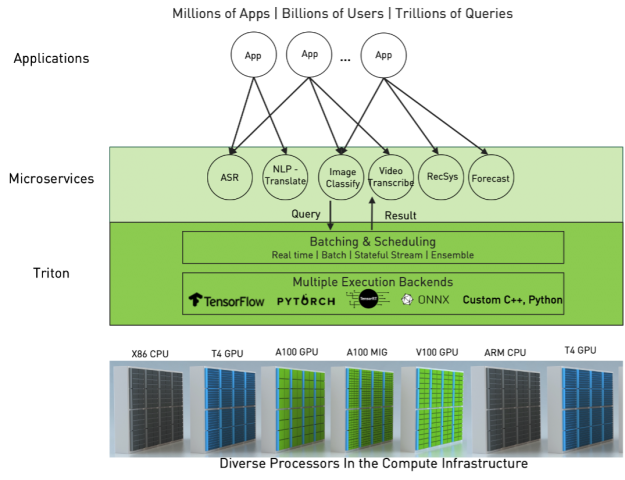

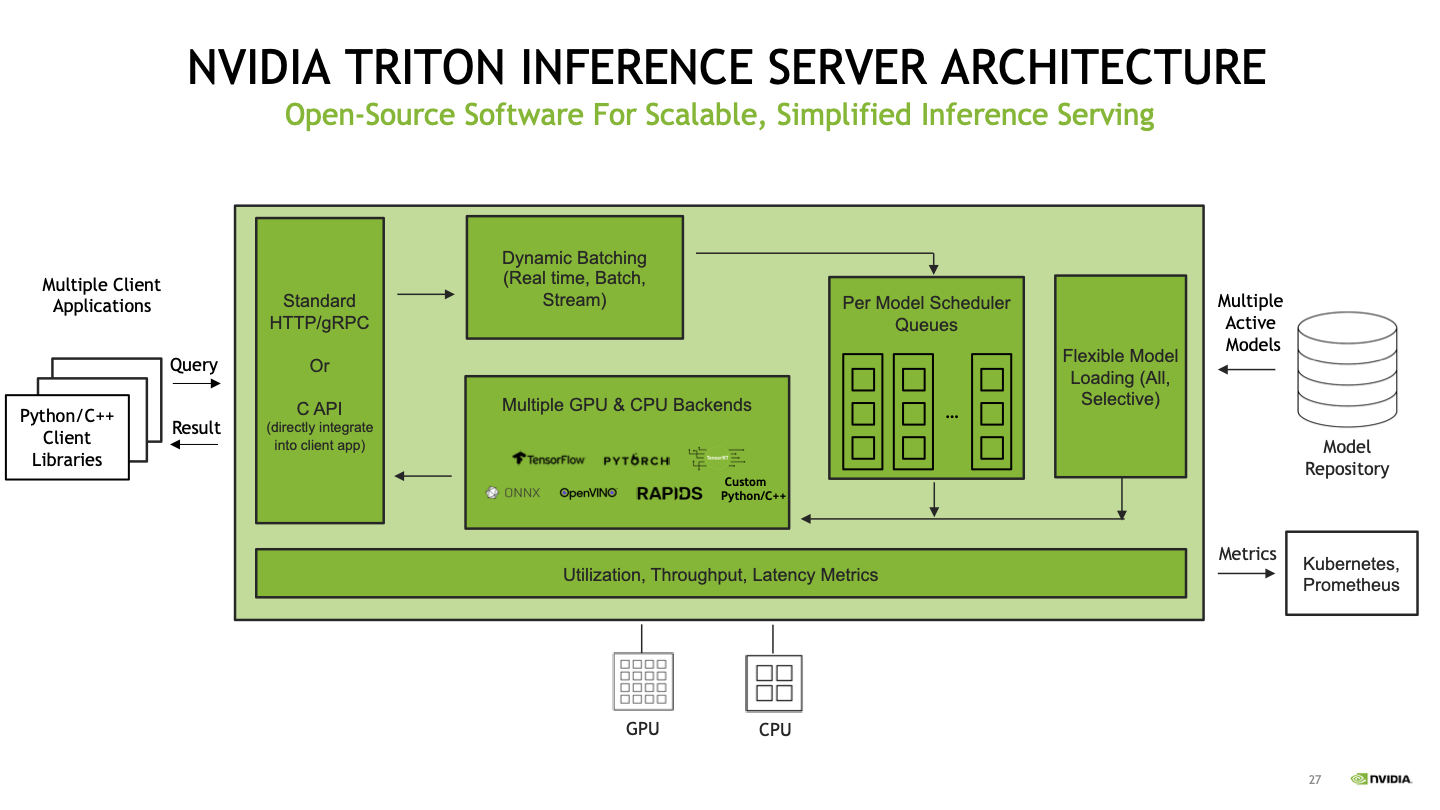

Optimizing Real-Time ML Inference with Nvidia Triton Inference Server | DataHour by Sharmili - YouTube

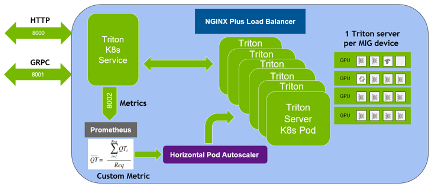

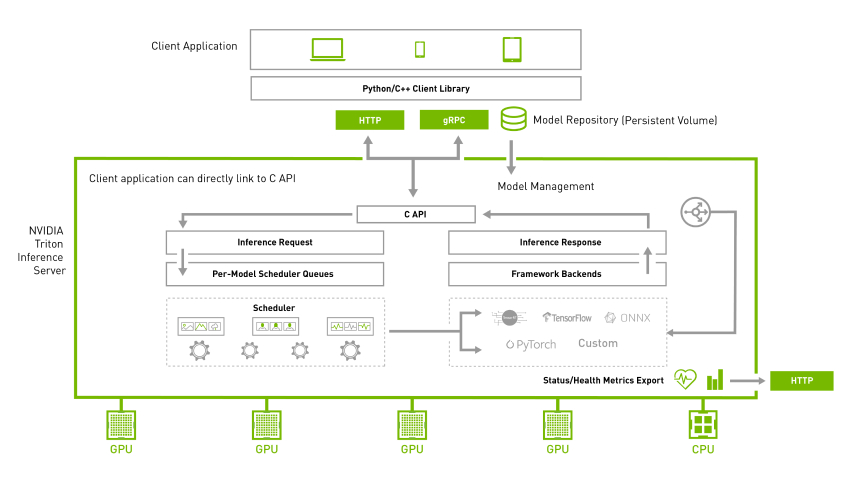

Achieve hyperscale performance for model serving using NVIDIA Triton Inference Server on Amazon SageMaker | AWS Machine Learning Blog

Achieve low-latency hosting for decision tree-based ML models on NVIDIA Triton Inference Server on Amazon SageMaker | AWS Machine Learning Blog

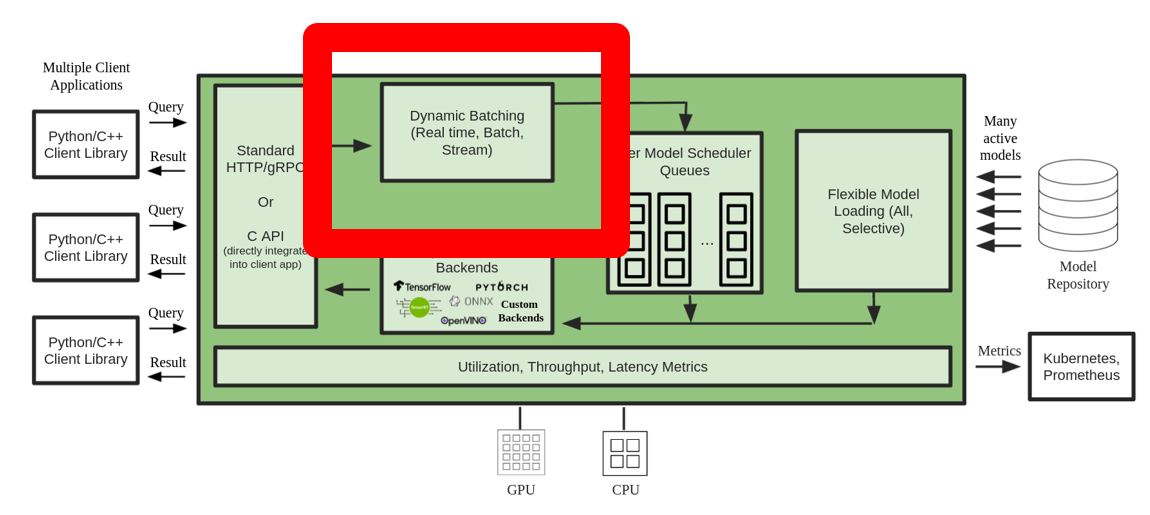

Running YOLO v5 on NVIDIA Triton Inference Server Episode 1: What is Triton Inference Server? - Semiconductor Business - Macnica, Inc.

Deploy fast and scalable AI with NVIDIA Triton Inference Server in Amazon SageMaker | AWS Machine Learning Blog