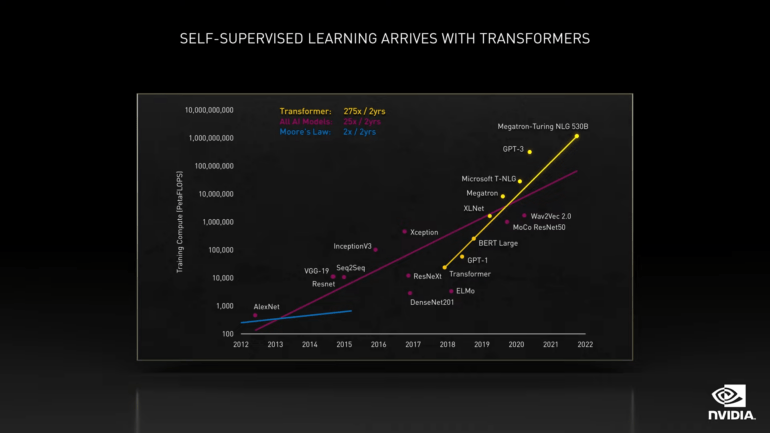

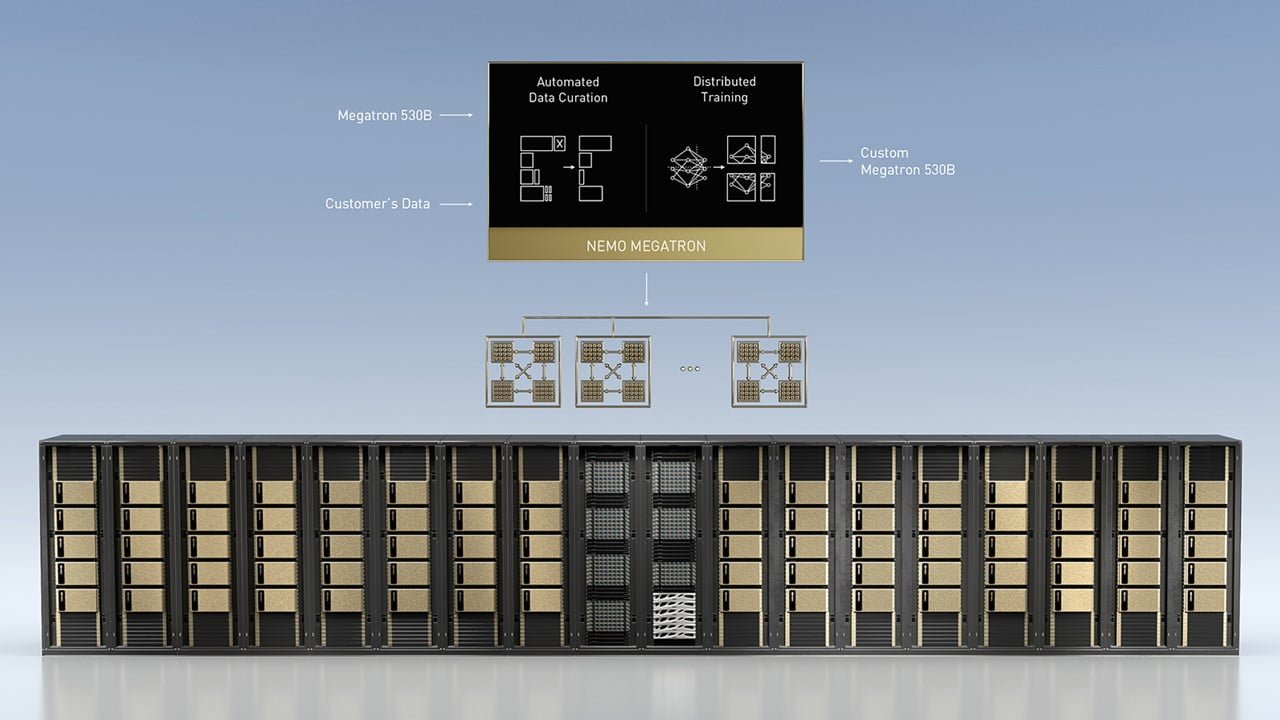

Using DeepSpeed and Megatron to Train Megatron-Turing NLG 530B, the World's Largest and Most Powerful Generative Language Model - Microsoft Research

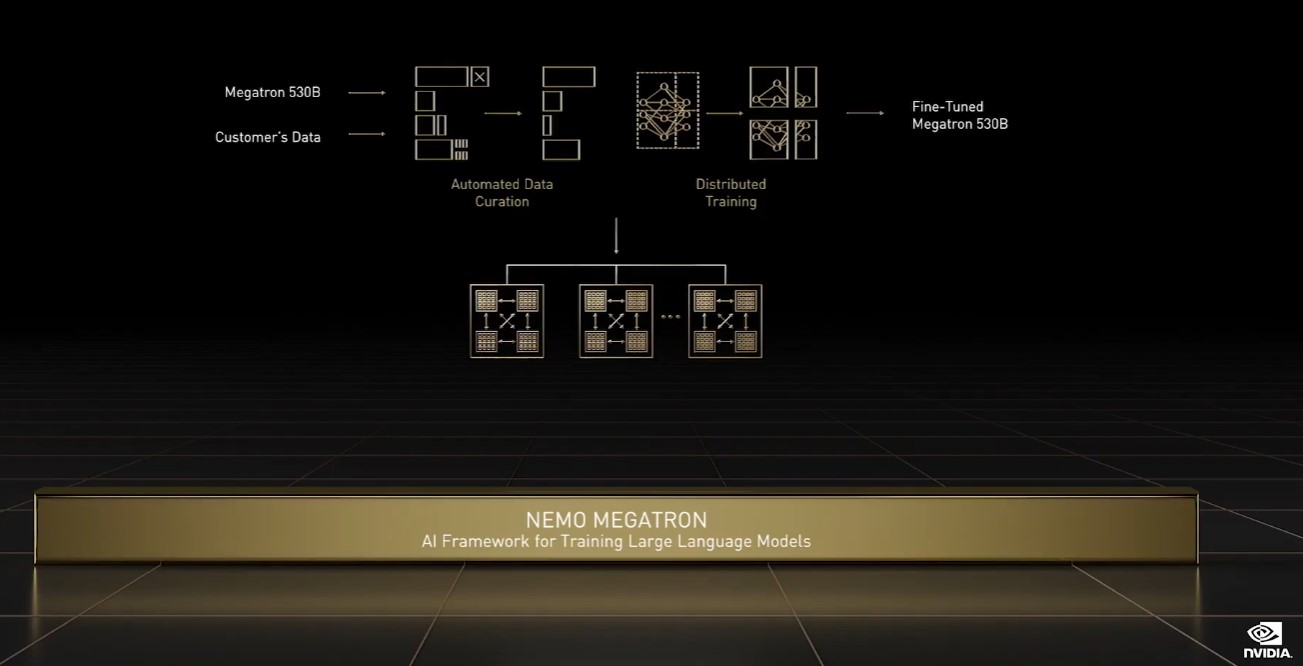

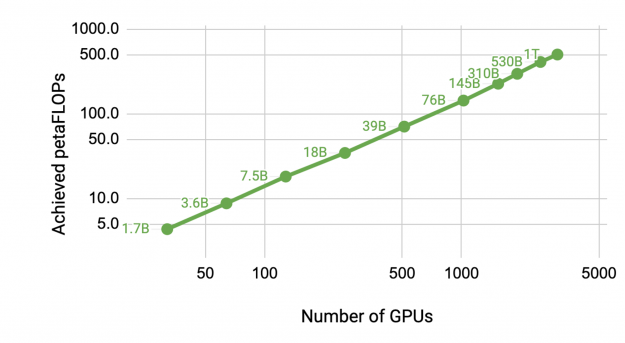

Nvidia Megatron: Not a robot in disguise, but a large language model that's getting faster | VentureBeat

An artificial intelligence was invited to speak about ethics at the University of Oxford | Rolling Stone Italia

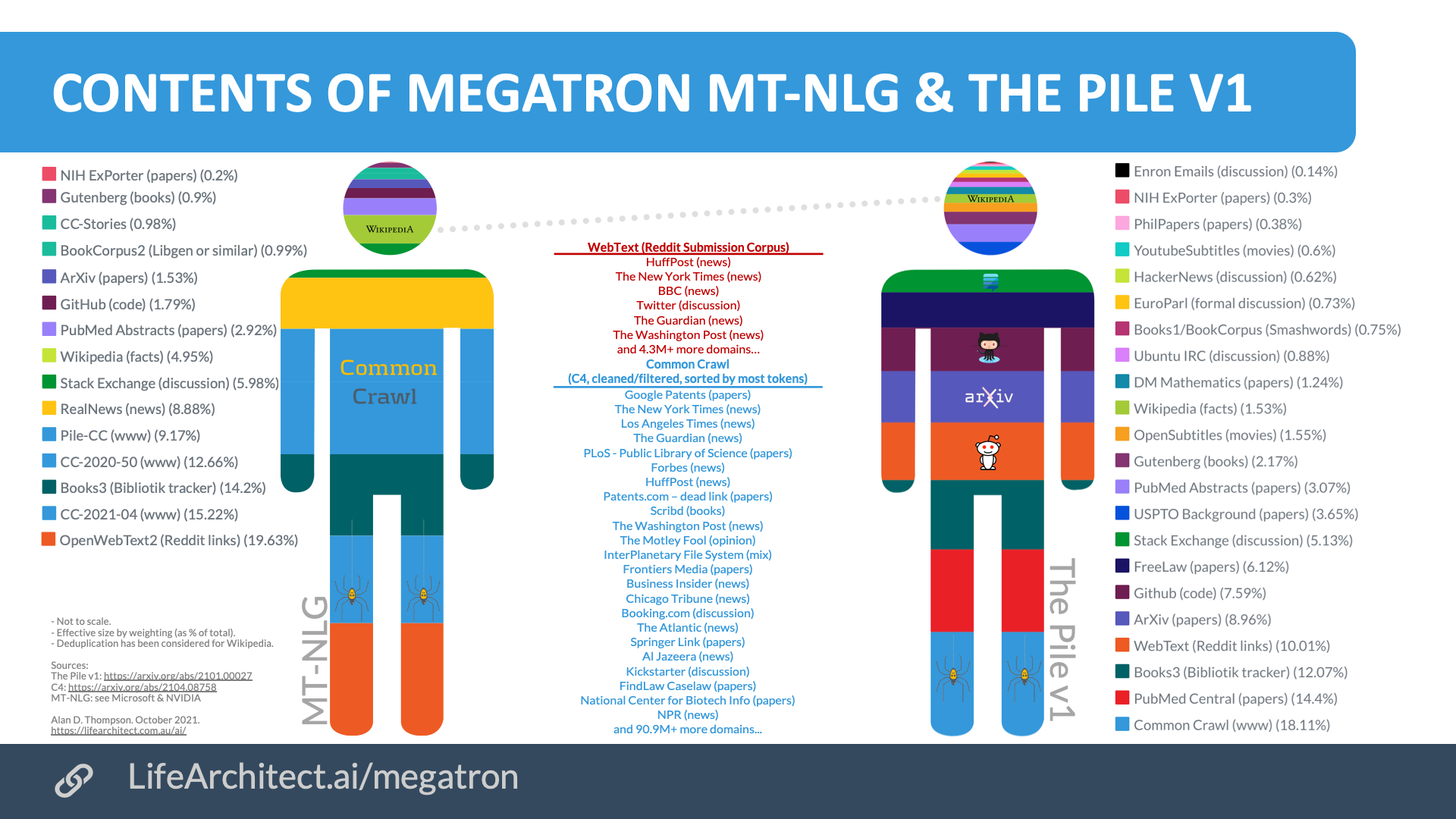

Microsoft & NVIDIA Leverage DeepSpeed and Megatron to Train Megatron-Turing NLG 530B, the World's Largest Monolithic Language Model | Synced

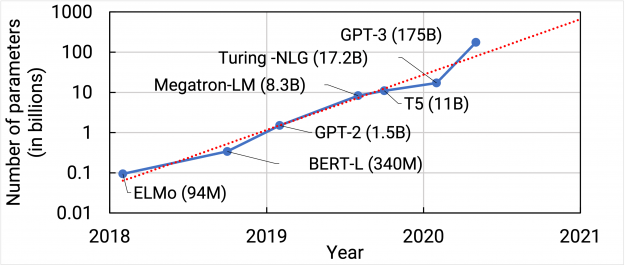

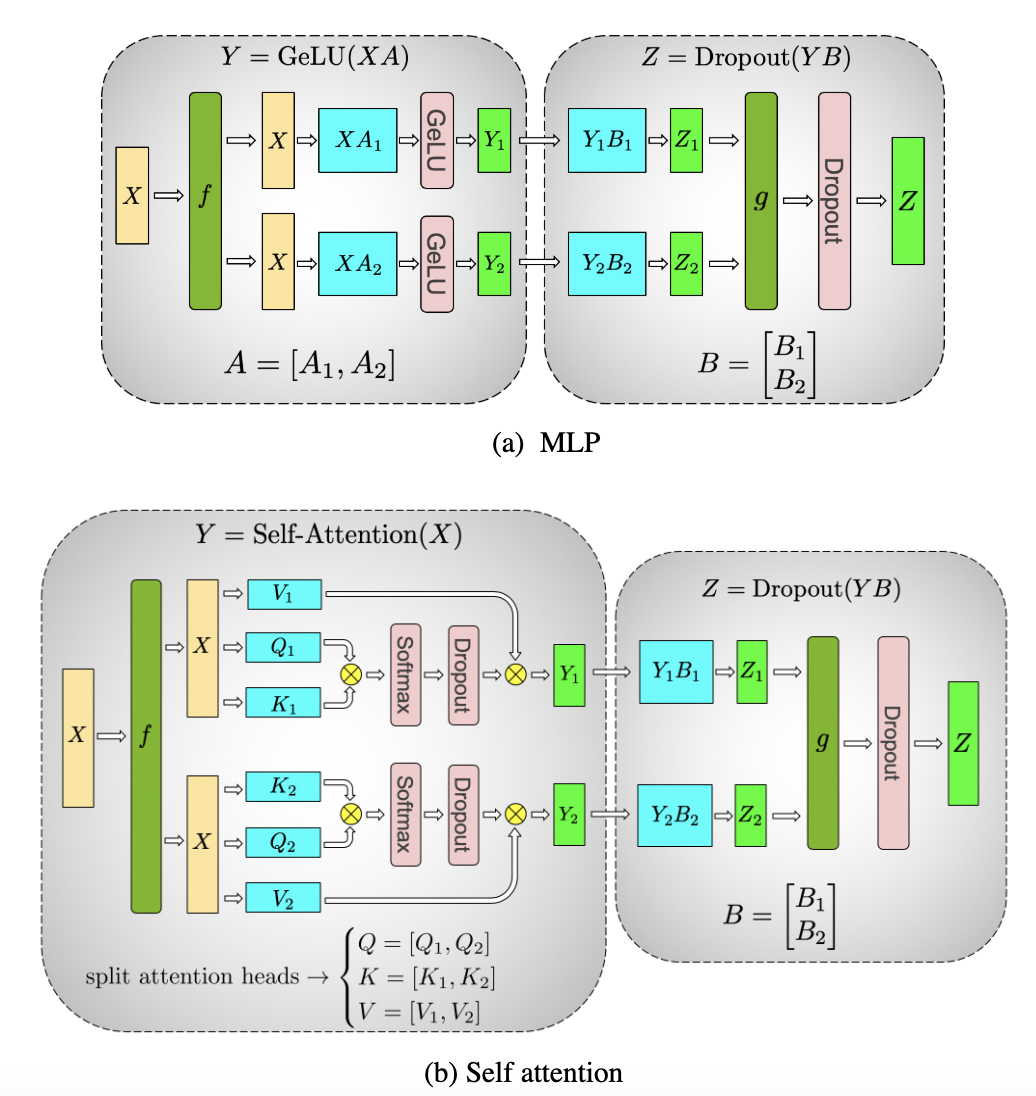

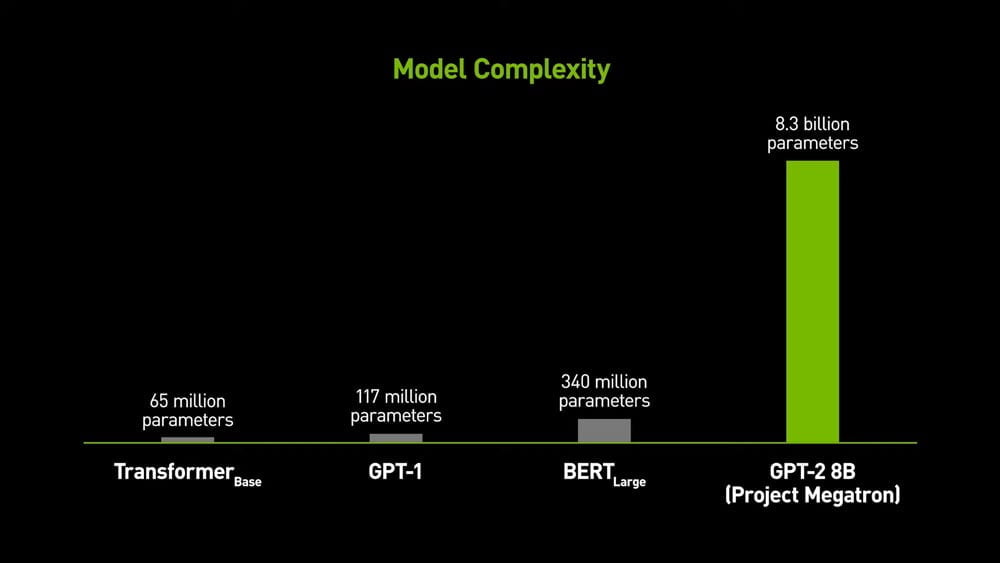

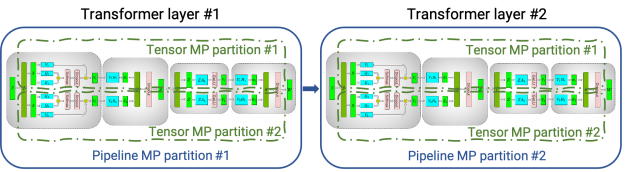

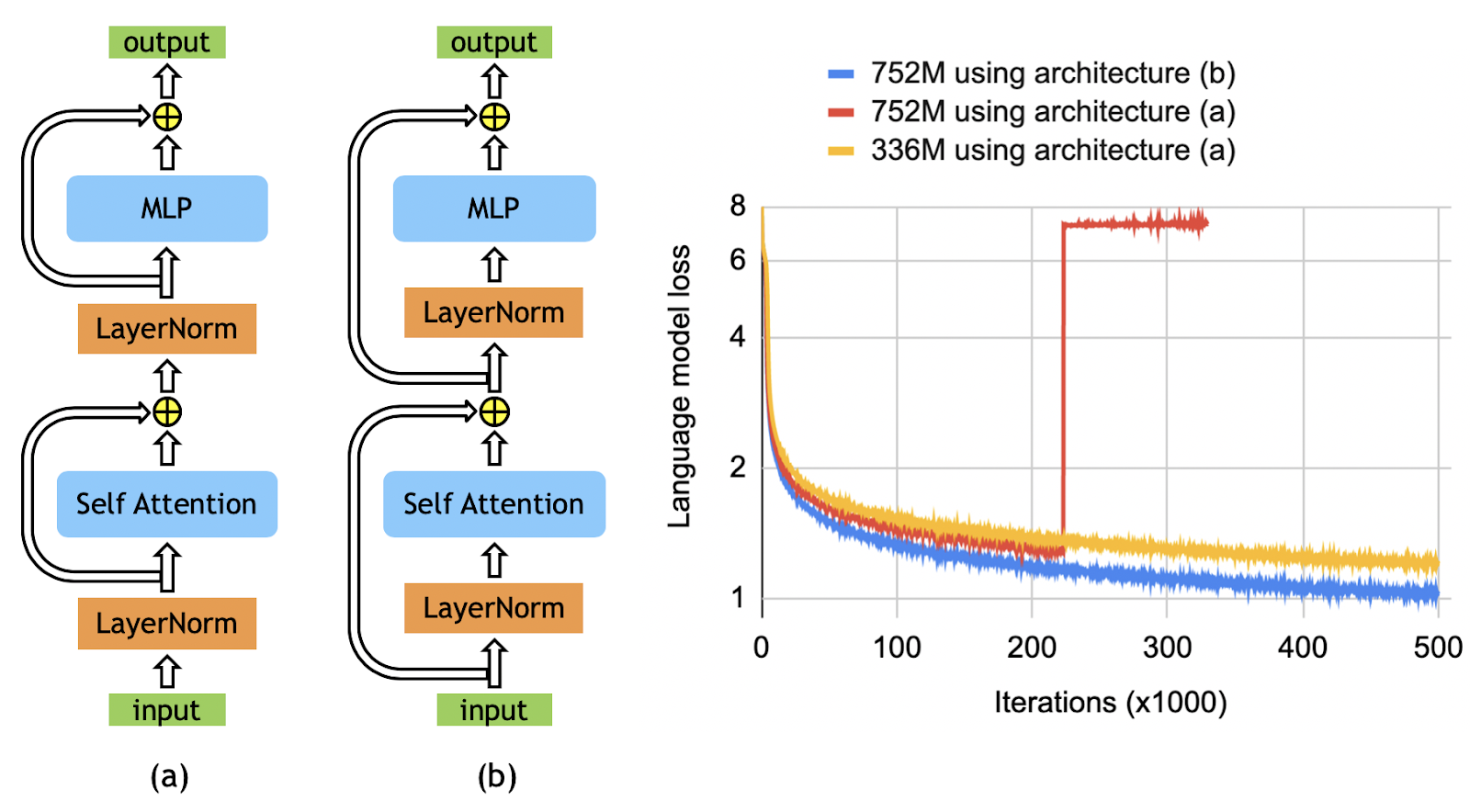

GTC 2020: Megatron-LM: Training Multi-Billion Parameter Language Models Using Model Parallelism | NVIDIA Developer