Deploying a PyTorch model with Triton Inference Server in 5 minutes | by Zabir Al Nazi Nabil | Medium

Triton server died before reaching ready state. Terminating Riva startup - Riva - NVIDIA Developer Forums

Failed with Jetson NX using tensorrt model and docker from nvcr.io/nvidia/tritonserver:22.02-py3 · Issue #4050 · triton-inference-server/server · GitHub

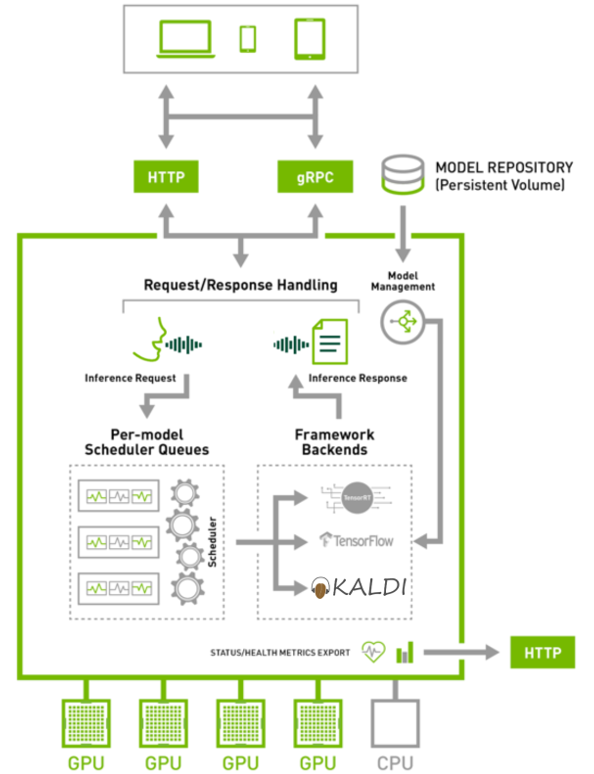

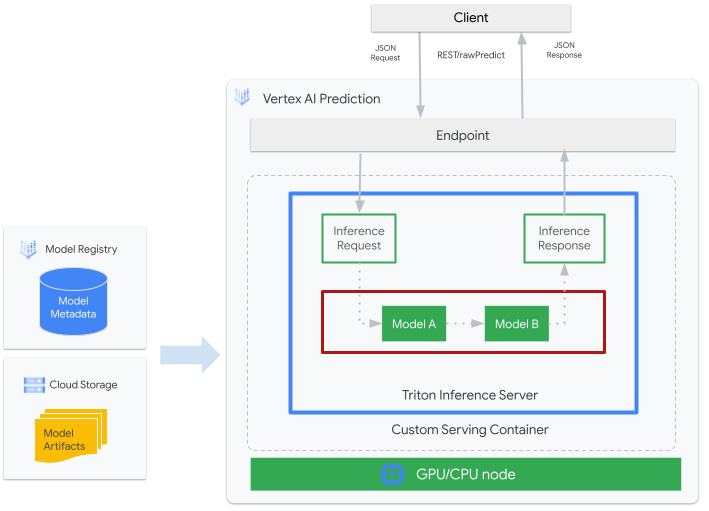

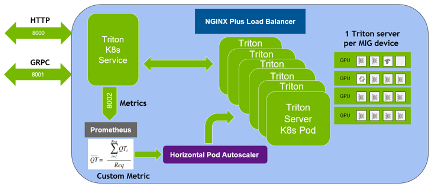

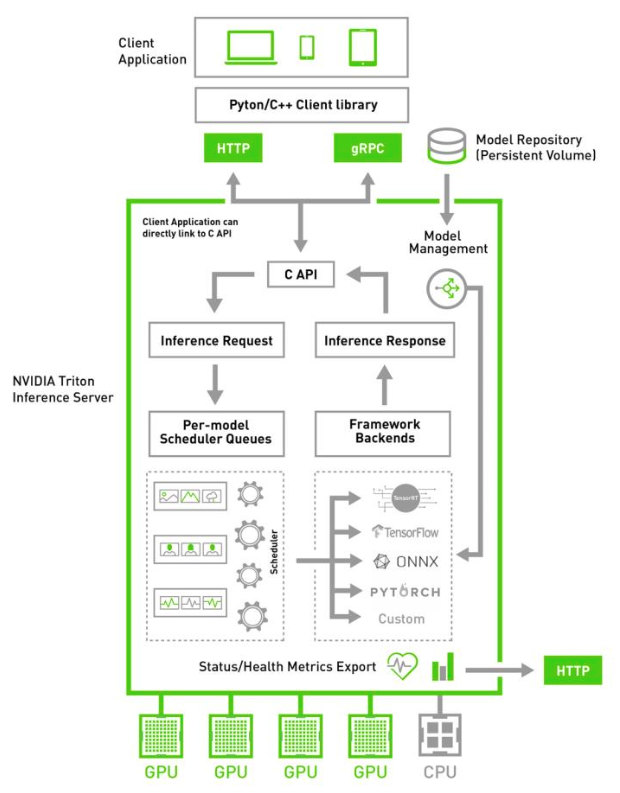

Simplifying AI Inference with NVIDIA Triton Inference Server from NVIDIA NGC | NVIDIA Technical Blog

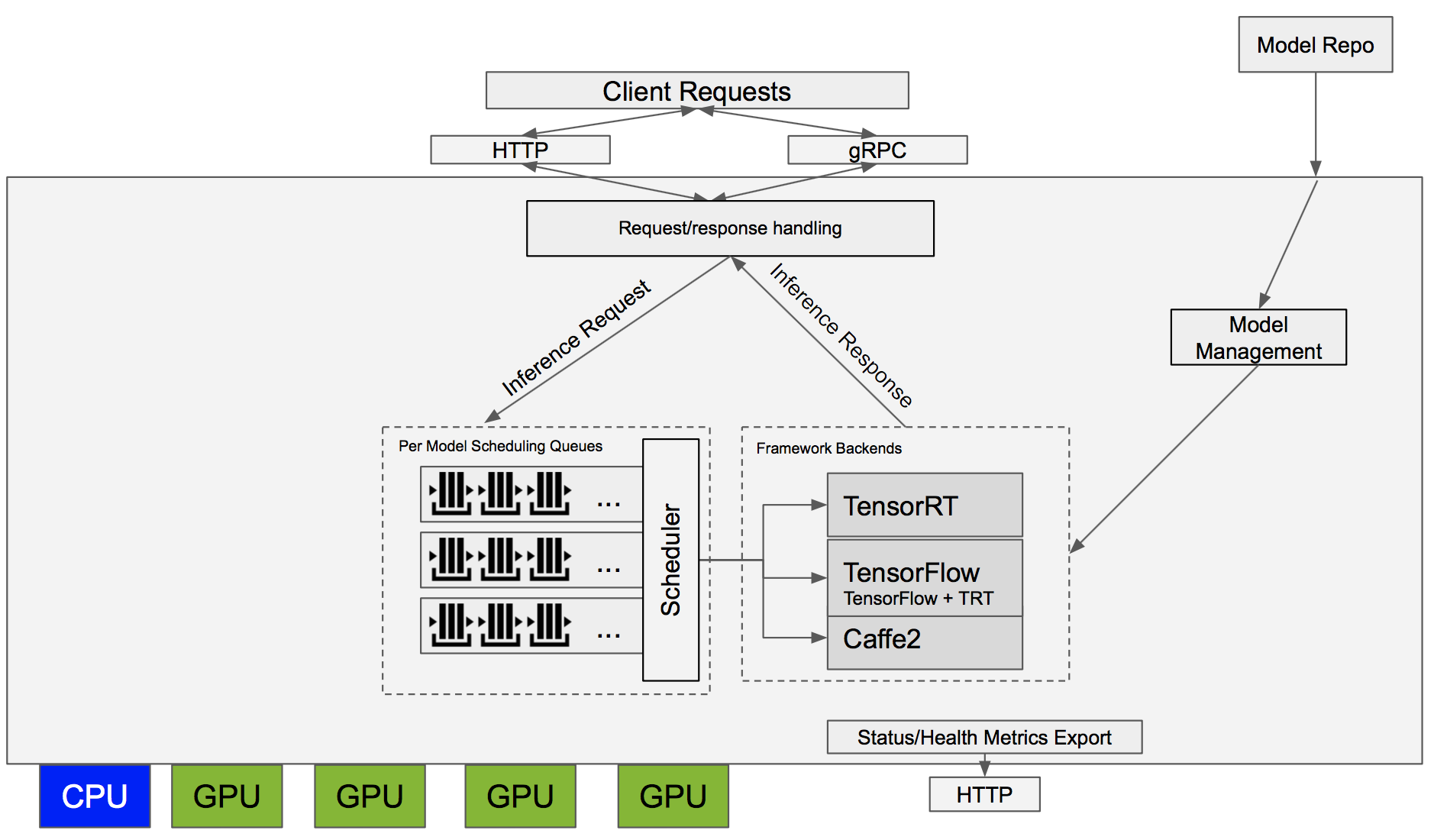

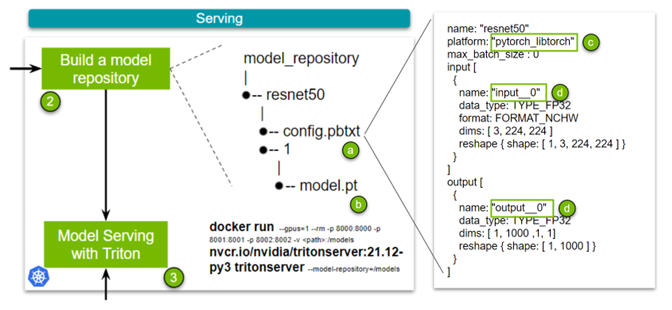

Triton server - required NVIDIA driver version vs CUDA minor version compatibility · Issue #3955 · triton-inference-server/server · GitHub

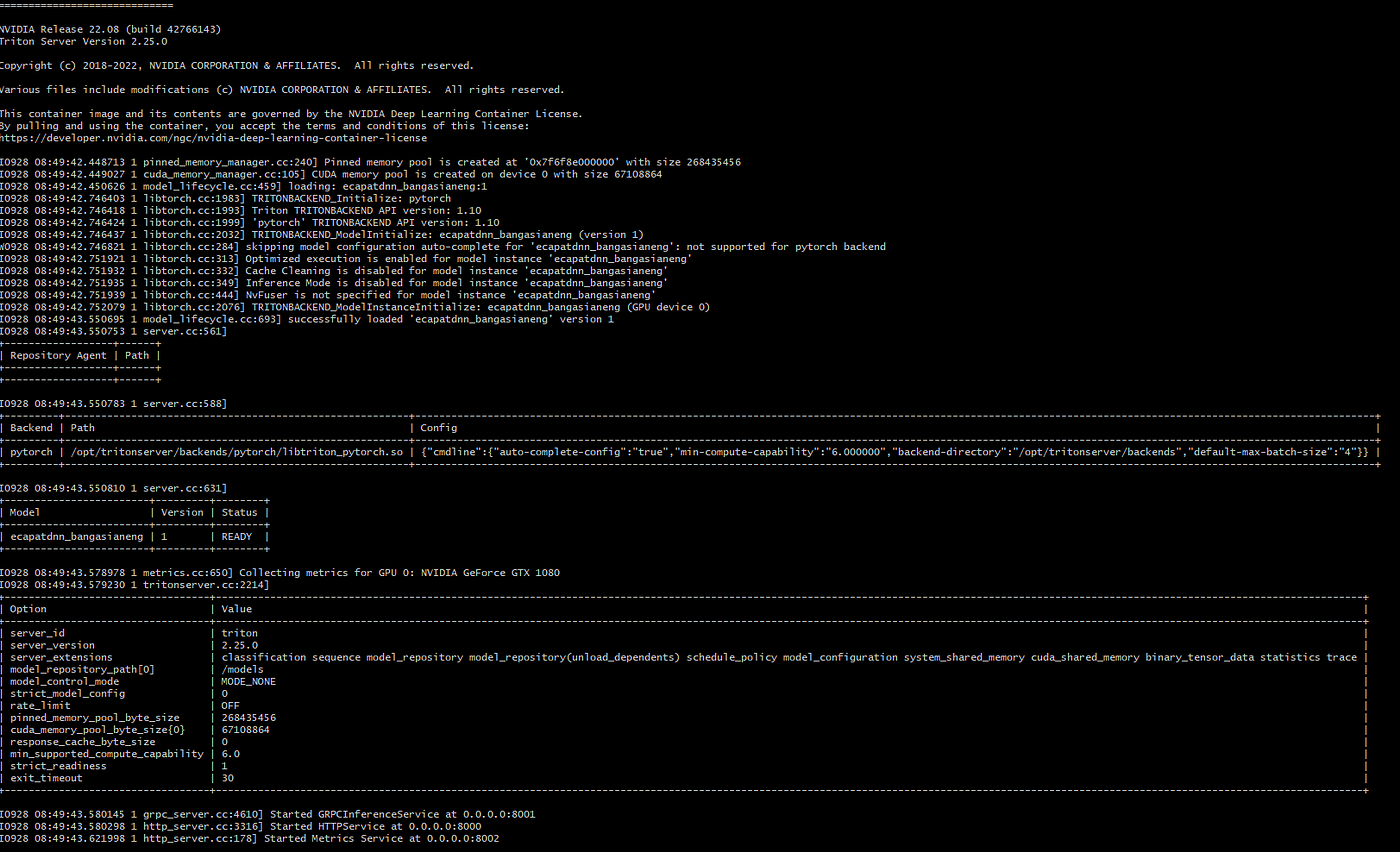

![[Fastpitch/Pytorch] Multi GPU inferencing in a single triton server issue · Issue #1229 · NVIDIA/DeepLearningExamples · GitHub](https://user-images.githubusercontent.com/15320876/204241762-027e893a-54dd-4c3f-ae8e-0fa3be06e6d9.png)

[Fastpitch/Pytorch] Multi GPU inferencing in a single triton server issue · Issue #1229 · NVIDIA/DeepLearningExamples · GitHub
